In today’s eCommerce world, A/B testing is a vital part of the strategy for any online retail business that aims to thrive in a highly competitive market.

To stay on top of their game, retailers continuously look for ways to change, innovate and improve. And the way to do that is through... yes, you’ve guessed it: experimentation!

How else could you really identify what works for your business and what doesn’t?

Today, we’re happy to share that we’ve added new functionality to our stack that enables experimentation across your ad campaigns: A/B Testing Product Titles. From now on, you can easily find your own optimum and set up your campaigns for success.

A/B Testing in Data Feeds - why is it important?

Product feed data is the foundation of every shopping campaign. This means that significant changes to your feed can make or break your product listings.

The feed should not be a cold set of data you have to provide a channel with in order to be eligible to serve ads. Sure, there is a list of requirements you need to stick to, but the optimization journey does not end there. On the contrary - that’s where it begins.

Every advertiser wants their ads to be successful and convert. But how can you ensure that your ads drive actual customers to your store, rather than just generating empty impressions?

The answer is simple: Always be testing.

Take the guesswork out of the equation and find out what your customers respond to. Then take those insights and apply them to your feeds.

Titles, being one of the most prominent parts of your ad, are a great place to start with A/B testing. With the right tool at hand, like DataFeedWatch for Google Shopping, it becomes a simple yet very powerful tactic!

A/B Testing Product Titles in DataFeedWatch

Excited to design your first A/B test? Great!

Time to get practical and learn how to incorporate experimentation into your feed optimization strategy.

Let’s take it one step at a time:

How does it work?

First and foremost, the new functionality enables you to run two different versions of titles across your product portfolio simultaneously, and then compare the performance data for each version in a clear overview.

This opens a window of opportunity for retailers to easily discover the best-performing title configuration and make strategic adjustments across their feeds.

Before we get hands-on with the functionality, let’s understand the mechanism:

- Tracking: for the A/B test to make sense, you need a method for collecting performance data on each title version. We achieve this by automatically appending a tracking parameter to the product link.

- Channels: the A/B test for titles is available across all channel feeds that contain product URLs, since tracking relies on the product link (see point above). Google Shopping, Facebook, Instagram and Google Search Ads are just a few examples. The feature is not available for eBay or Amazon feeds.

- Distribution: only one title version can be assigned to each item ID. We distribute the A-title and the B-title evenly across all products by alternating between them: Variant A is assigned to products 00001, 00003, 00005, etc., and Variant B to products 00002, 00004, 00006, etc.

The results for each version are based on the group of products that has been assigned to it.
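To make the mechanism more tangible, here is a minimal Python sketch of the alternating distribution and the link tagging described above. It is an illustration only, not DataFeedWatch's actual implementation: the parameter name `ab_variant`, the product fields and the helper names are all assumptions made up for this example.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse

# Hypothetical parameter name, chosen for this example only.
TRACKING_PARAM = "ab_variant"


def assign_variant(position: int) -> str:
    """Alternate variants by feed position: 1st item A, 2nd item B, and so on."""
    return "A" if position % 2 == 0 else "B"


def add_tracking(url: str, variant: str) -> str:
    """Append the tracking parameter while keeping any existing query string."""
    parts = urlparse(url)
    query = parse_qsl(parts.query, keep_blank_values=True)
    query.append((TRACKING_PARAM, variant))
    return urlunparse(parts._replace(query=urlencode(query)))


def build_test_feed(products, title_a, title_b):
    """Give each product exactly one title version and a tagged link."""
    feed = []
    for position, product in enumerate(products):
        variant = assign_variant(position)
        make_title = title_a if variant == "A" else title_b
        feed.append({
            "id": product["id"],
            "variant": variant,
            "title": make_title(product),
            "link": add_tracking(product["link"], variant),
        })
    return feed


# Made-up sample products for illustration.
products = [
    {"id": "00001", "brand": "Acme", "name": "Gas Grill",
     "link": "https://example.com/p/1"},
    {"id": "00002", "brand": "Acme", "name": "Charcoal Grill",
     "link": "https://example.com/p/2?ref=feed"},
]
feed = build_test_feed(
    products,
    title_a=lambda p: f"{p['brand']} {p['name']}",  # brand at the front
    title_b=lambda p: f"{p['name']} {p['brand']}",  # brand at the end
)
for item in feed:
    print(item["id"], item["variant"], item["title"], item["link"])
```

Because every link carries its variant, any click or conversion recorded in your analytics tool can be traced back to title A or title B.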

Steps to set up an A/B test in DataFeedWatch

1. Map your IDs

For the feature to be available in your channel feeds, you need to ensure that the ‘ID’ field in the Master Fields section is mapped. Skip this step if you already completed it during the store set-up.

Otherwise, find the Master Fields panel in the side navigation bar and fill in the required attribute:

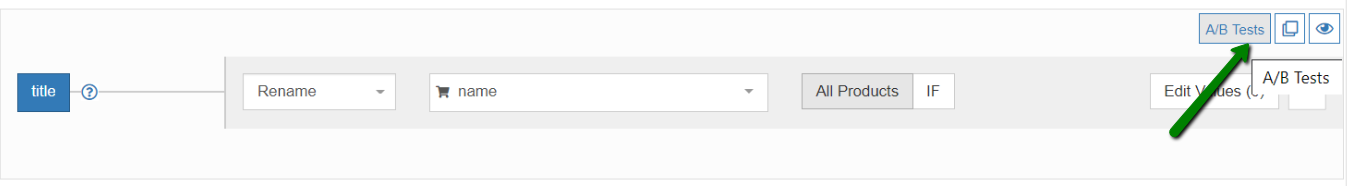

2. Enable Title Split Up

Proceed to the mapping panel of the channel of your choice (‘Edit Feed’) and find the A/B test button in the top right corner of the title section:

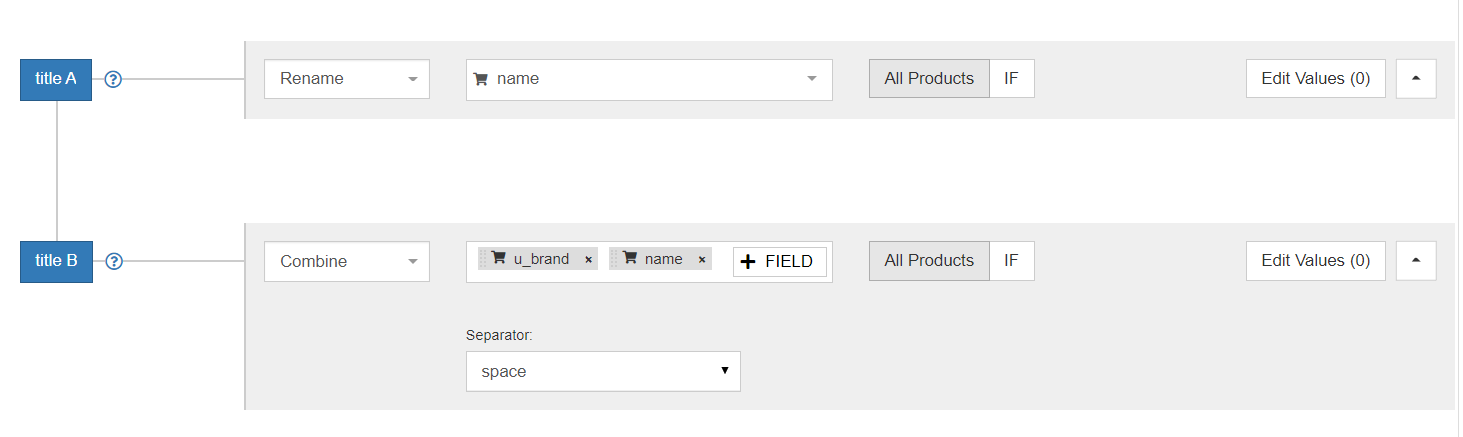

3. Set up version A and version B of your product titles

Determine the variable that you’d like to put to the test.

Then, create the desired structure for each title version. The set-up part works in the exact same way as for all other feed attributes. You could map it to a specific field from your store, combine attributes or even upload customized titles from a spreadsheet for one of the versions.

There are no limits in terms of modification options. So, step into your customer’s mindset and get creative!

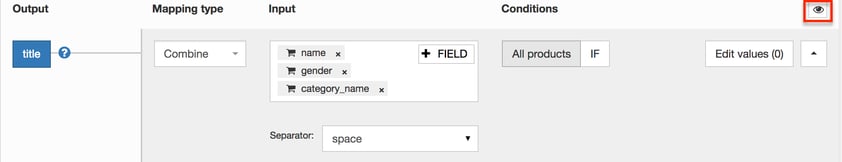

4. Preview and Save Changes

Once you’ve set up the title structures you want to test, take a quick look at the preview (eye icon in the top right corner). Each title version has a separate preview.

If you’re satisfied with the new set-up, save the changes and your feed will be updated.

Note: the preview does not reflect the A/B distribution. This means that you can see the title for item X in both the A-preview and the B-preview. In the output feed, only one title version is assigned to each item ID.

Once saved, you can check the ‘Show Feed’ section to review the distribution of each title version per item.

Analyze and select a winner

A thorough analysis is an indispensable element of every successful experiment. So, how can we track and measure the results?

We’ve added a link parameter to track the performance of the two title versions. This way you can easily check the performance of your titles at any time in Google Analytics.

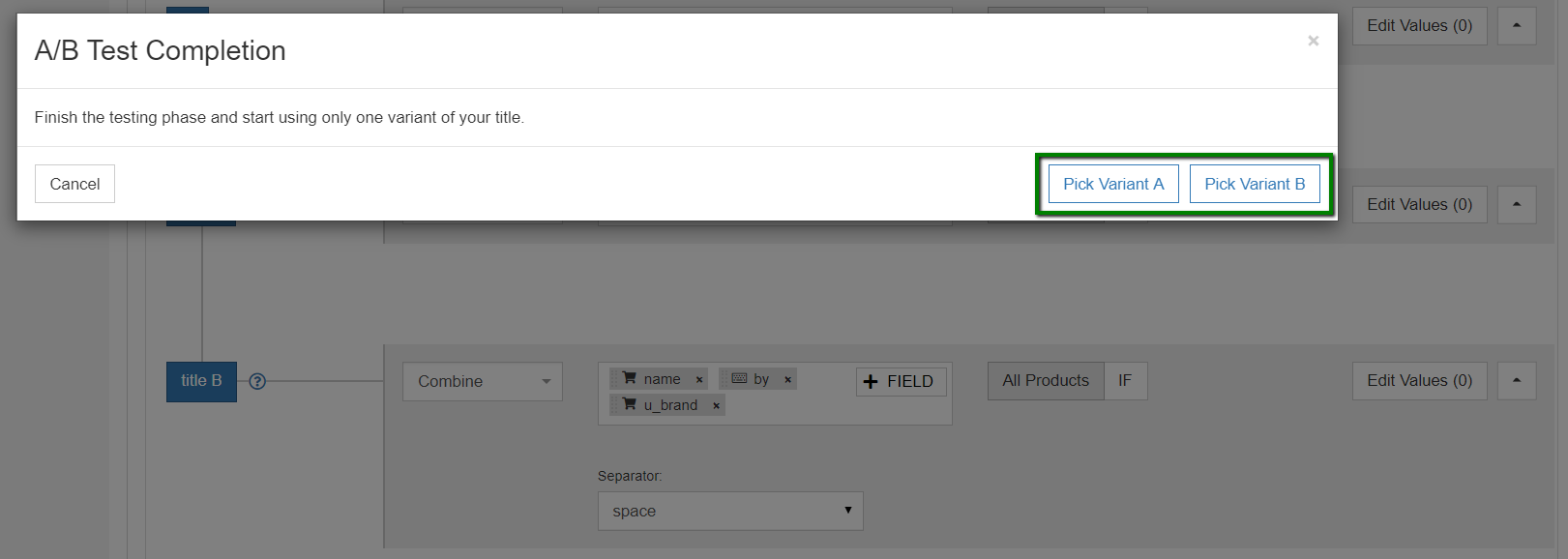

Once you’ve collected enough data to pick your winner, simply go back to the feed settings in DataFeedWatch (‘Edit Feed’) and click the A/B test button once more to confirm the winning version.

Voilà! The new title structure will be applied to all products within the feed.
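Before confirming a winner, it can help to check whether the gap between the two versions is larger than random noise. The sketch below shows one common way to do that, a two-proportion z-test on conversion rate, using only Python's standard library. The numbers are made up for illustration, the function names are hypothetical, and the test treats every click as independent, which is a simplification because the split here is done per product.

```python
import math


def rate(successes: int, trials: int) -> float:
    """Share of trials that succeeded, e.g. conversions per click."""
    return successes / trials if trials else 0.0


def two_proportion_z_test(success_a, total_a, success_b, total_b):
    """Return (z, two-sided p-value) for the difference between two rates.

    Both totals must be greater than zero.
    """
    pooled = (success_a + success_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation at all, so no detectable difference
    z = (rate(success_b, total_b) - rate(success_a, total_a)) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up numbers: clicks and conversions per title version.
results = {
    "A": {"clicks": 1000, "conversions": 50},
    "B": {"clicks": 1000, "conversions": 80},
}
z, p_value = two_proportion_z_test(
    results["A"]["conversions"], results["A"]["clicks"],
    results["B"]["conversions"], results["B"]["clicks"],
)
print(f"A: {rate(50, 1000):.1%}  B: {rate(80, 1000):.1%}")
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A positive z means version B has the higher rate. A small p-value (0.05 is a common threshold) suggests the difference is unlikely to be down to chance alone.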

A/B Testing Best Practices

Every shop is different and should be approached individually when incorporating experiments into the strategy.

Having said that, there are some rules that are good to go by regardless of your vertical, territory or market trends. Let’s take a look at the most important DOs and DON’Ts for conducting successful A/B experiments:

1. Decide on the duration and size

To ensure that the A/B test results are accurate and relevant, you need to allow the experiment to run for a sufficient time and on a sufficient number of products.

- To define the test duration, you could use a time unit, e.g. 1 to 2 weeks, or tie it to a specific performance metric, for instance running until you reach 100 clicks or conversions.

- As for the size: conclusions are usually easier to draw if the results come from a larger sample. We recommend running the test on 100 products or more.
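If you like to keep yourself honest, the two duration rules above can be written down as simple checks before the test starts. This is only a sketch with hypothetical helper names. The defaults mirror the examples in this section (2 weeks, 100 clicks), and requiring both versions to reach the target is our own assumption, so adjust it to your account.

```python
from datetime import date


def ran_long_enough(start: date, today: date, min_days: int = 14) -> bool:
    """Time-based rule: the test has been live for at least `min_days`."""
    return (today - start).days >= min_days


def reached_target(count_a: int, count_b: int, target: int = 100) -> bool:
    """Metric-based rule: both versions have at least `target` clicks
    (or conversions, depending on what you count)."""
    return min(count_a, count_b) >= target


start = date(2024, 1, 1)
print(ran_long_enough(start, date(2024, 1, 15)))  # 14 days have passed
print(reached_target(count_a=120, count_b=95))    # version B is still short
```

Deciding on the rule up front makes it harder to stop the test early just because one version happens to be ahead.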

2. Test only one variable at a time

This one also goes back to the results of the A/B test. If you want to obtain accurate data and really measure the impact of a specific change, you need to limit the other factors.

Testing multiple variables at once won’t give you a clear overview of how each change influenced the performance of your shopping ads.

3. Define the key success metric

Are you going for greater CTR? Or maybe what matters is the number of conversions?

Being clear about your goal is crucial to interpreting the A/B test results and will make it easy to select the winner as you conclude the test.

4. Always be testing

Do you want your ads to thrive and stay ahead of competitors in the long run?

If so, you need to make a habit of experimenting and continuously identify new ways of capturing the attention of the buyers. Don’t stop at just one test.

Your ads’ performance might be influenced by many factors. Add the ever-changing eCommerce market to that and the possibilities for testing become infinite. Still, keep in mind rule #2!

Practical Examples - where to start?

Coming up with ‘what to test?’ in your titles may not be the easiest task at hand, especially if you’re about to make your first attempt.

Analyzing your current title structure and comparing it to the recommended practices for titles is a good place to start. You can learn more about the optimal title structure for Shopping Ads in our other article.

Another idea could be to look at the titles of your best-selling products and those with poor performance to try to form a hypothesis that will become a base for your test.

To make this easier, we’ve put together a quick list with ideas for A/B testing titles:

- Position - experiment with the placement of a specific attribute, for example, Brand name at the front vs at the end of a title

- Keep or Toss - there are many product attributes that might be relevant from the customer’s perspective, but what is their actual impact on your ads? Examples: color, size, material etc.

- Synonyms - find words that really speak to your audience and are well aligned with their culture, e.g. in the US, would you say 'grill' or 'BBQ'?

- Abbreviations - do you have words in your titles that are commonly shortened to abbreviations? It could be your Brand name or any one of the attributes.

- Length - what kind of titles work best for your audience: very short or maybe more descriptive? (Related: Google Shopping Title Allowed Length)
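To show how a few of these ideas translate into concrete title versions, here is a small Python sketch. Everything in it is hypothetical: the `build_title` helper, the attribute names and the sample product are made up for illustration. The 150-character cap reflects the title limit commonly documented for Google Shopping, so double-check it against the current specification for your channel.

```python
def build_title(product: dict, fields: list[str], max_length: int = 150) -> str:
    """Join the chosen attributes in order, skipping any that are empty.

    Titles longer than `max_length` are simply cut off, which may split a word.
    """
    parts = [str(product[f]).strip() for f in fields if product.get(f)]
    return " ".join(parts)[:max_length].rstrip()


# Made-up sample product.
product = {"brand": "Acme", "name": "Gas Grill", "color": "Black", "material": ""}

# Position: brand at the front vs at the end.
brand_first = build_title(product, ["brand", "name", "color"])
brand_last = build_title(product, ["name", "color", "brand"])

# Keep or Toss: the same title with and without the color.
without_color = build_title(product, ["brand", "name"])

print(brand_first)    # Acme Gas Grill Black
print(brand_last)     # Gas Grill Black Acme
print(without_color)  # Acme Gas Grill
```

Each pair differs in exactly one respect, which keeps the test in line with rule #2.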

Conclusions

In the extremely dynamic environment of online advertising, A/B experimentation plays a crucial role in keeping up with the pace of change and refining your approach to best suit your customers’ needs.

We’re very happy to roll out the new A/B testing functionality, which we hope will help your business grow at a faster pace and continuously identify new improvement opportunities.

So, what’s more to say than ‘let's get this rollin’? 😉